Serverless computing does not mean that servers are no longer involved; it means that developers no longer have to manage them. It is a model that lets developers deploy and run code without handling the provisioning and maintenance of the servers underneath. The developer can concentrate on the application rather than on the infrastructure.

The evolution of serverless computing follows the stages of cloud computing, from Infrastructure-as-a-Service (IaaS) to Platform-as-a-Service (PaaS) to Function-as-a-Service (FaaS). IaaS abstracts the underlying hardware and provides virtual machines that are ready to use. PaaS also abstracts the operating system and the middleware layer, and provides an application platform. FaaS goes one step further by abstracting the entire programming runtime, so that a piece of code can be deployed and executed without worrying about the infrastructure it runs on.
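To make the FaaS idea concrete, here is a minimal sketch of what such "a piece of code" can look like. The `handler(event, context)` signature mirrors the one used by AWS Lambda's Python runtime; the `name` field, the shape of the returned dictionary, and the local call at the bottom are assumptions made for illustration.

```python
import json


def handler(event, context):
    """Entry point the platform would call once per invocation.

    `event` carries the request payload; `context` carries runtime
    metadata and is unused in this sketch.
    """
    # "name" is a hypothetical field chosen for this example.
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local stand-in for the platform invoking the function.
    print(handler({"name": "serverless"}, None))
```

Nothing in the function refers to a server, a port, or a process lifecycle; those concerns are left to the platform.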

Serverless Computing Evolution

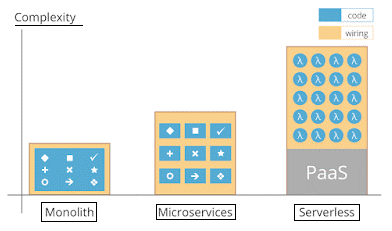

The need for greater agility and scalability led to the evolution of serverless computing through the following phases:

monolithic → microservices → serverless architecture (FaaS)

Microservice architecture emerged as a key practice that gives development teams flexibility, along with benefits such as the ability to deliver an application quickly on Platform-as-a-Service (PaaS) and Infrastructure-as-a-Service (IaaS) environments. In a monolithic architecture, by contrast, even a single defective service can bring down the entire app server along with the other services running on it.

Among the advantages of microservices are that they can be written in different programming languages and can be scaled and deployed separately. However, a key decision that many organizations face when deploying a microservices architecture is choosing between IaaS and PaaS environments.

Serverless computing is somewhat similar to microservices. With microservices, a developer assembles the application and calls services from functions, whereas in serverless computing the developer can pick functions from a library.

Advantages of Serverless Model

The serverless model has several advantages over the earlier architectural layers. Some of them are listed below.

- Choice of technology – Different functions can be written in different languages, whichever suits the developer.

- Never pay for idle – No idle servers are kept running, so you pay nothing when there is no traffic. When traffic starts, capacity can be brought up within milliseconds. Hence you only pay for what you use.

- Team responsibilities simplified – Complex and time-consuming discussions tend to arise between teams working on different parts of a complex application. With clearly defined API boundaries, teams can collaborate and communicate more effectively.

- Offloading infrastructure worry – In serverless computing, the infrastructure consists of the functions themselves, and managing them is what counts as “Infra-Management”. The service provider takes care of this, so the developer does not have to worry about it.

Disadvantages of Serverless Model

Although the advantages outweigh the disadvantages, the serverless model has some minor drawbacks compared to its architectural predecessors.

- Architectural complexity – Consumers may run into architectural issues, even when they only mix and match existing services. Managing the different parties and architectures involved is not always a smooth ride.

- Privacy and multi-tenancy – Because functions belonging to different customers often run on the same server, attackers may be able to exploit a security loophole in the system.

- Less control – Consumers have less control over the whole process because the services managed in the cloud belong to third-party providers. They may have to stay with the vendor chosen initially, as moving to a new platform is costly.

What is new about Serverless?

Consider a real-world example: the Federal Communications Commission (FCC) website broke down when it was unable to handle the volume of comments about net neutrality. This is where serverless could have made a difference. The traffic generated could have been handled effectively if the FCC had used a serverless platform. The example illustrates how elasticity and cloud bursting help sites handle larger loads.
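The elasticity argument can be illustrated with a rough capacity estimate. The sketch below uses Little's law (average concurrency = arrival rate × average duration) to approximate how many function instances must run at once. The traffic numbers are invented for illustration and are not figures from the FCC incident, and the one-request-per-instance model is a simplifying assumption.

```python
import math


def required_instances(requests_per_second: float, avg_duration_s: float) -> int:
    """Estimate concurrent instances via Little's law (L = lambda * W).

    Assumes each instance handles one request at a time and that
    traffic is steady over the interval considered.
    """
    return math.ceil(requests_per_second * avg_duration_s)


# Hypothetical traffic levels: a quiet period and a sudden spike.
quiet = required_instances(requests_per_second=5, avg_duration_s=0.25)
spike = required_instances(requests_per_second=4000, avg_duration_s=0.25)
print(quiet, spike)
```

A fixed fleet sized for the quiet period would be overwhelmed by the spike, whereas a serverless platform is expected to raise and lower the instance count on its own.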

What makes serverless attractive is that cloud providers offer an ecosystem of services, such as artificial intelligence and middleware, that integrate seamlessly with the serverless platform. Adopting these services not only makes the application dependent on the provider's environment, which results in vendor lock-in, but also gives the cloud provider another revenue stream.

Characteristics

Serverless platforms are distinguished by a number of characteristics, and a developer should be aware of them when choosing a platform. Some characteristics of serverless computing are listed below.

- Cost: Users pay only for what they use, which corresponds to the time and resources consumed while the serverless function is active. The resources that are metered, such as memory or CPU, and the pricing model vary among service providers.

- Programming languages: Serverless services support a variety of programming languages, including JavaScript, Java, Python, Go, C#, and Swift. Most service providers support more than one programming language, while some are limited to only one.

- Monitoring and debugging: Additional capabilities are provided to help developers detect errors, find bottlenecks, and so on. Every platform supports basic debugging through output that is recorded in the execution log.

- Deployment: Platforms make deployment as simple as possible. The developer only has to hand over a file with the function source code. The code can also be packaged as an archive containing multiple files, and the service provider takes care of the rest.
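The packaging step described under Deployment can be sketched with Python's standard `zipfile` module. The file names (`handler.py`, `helpers.py`) and the archive name are hypothetical, and the upload step is deliberately left out because it differs from provider to provider.

```python
import tempfile
import zipfile
from pathlib import Path


def package_function(source_dir: Path, archive_path: Path) -> list[str]:
    """Zip every file under source_dir, keeping paths relative to it."""
    with zipfile.ZipFile(archive_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(source_dir.rglob("*")):
            if path.is_file():
                archive.write(path, path.relative_to(source_dir).as_posix())
        return archive.namelist()


def demo() -> list[str]:
    """Build a tiny two-file function in temp directories and package it."""
    with tempfile.TemporaryDirectory() as src, tempfile.TemporaryDirectory() as out:
        source_dir = Path(src)
        # Hypothetical function source files.
        (source_dir / "handler.py").write_text(
            "def handler(event, context):\n    return 'ok'\n"
        )
        (source_dir / "helpers.py").write_text("# shared helper code\n")
        return package_function(source_dir, Path(out) / "function.zip")


if __name__ == "__main__":
    print(demo())
```

The archive is written outside the source directory so that it does not end up inside itself.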

Security Aspects

Here, the developer is not accountable for managing the security of the servers. That is the responsibility of the service provider, which means the user has to trust the provider to secure its infrastructure.

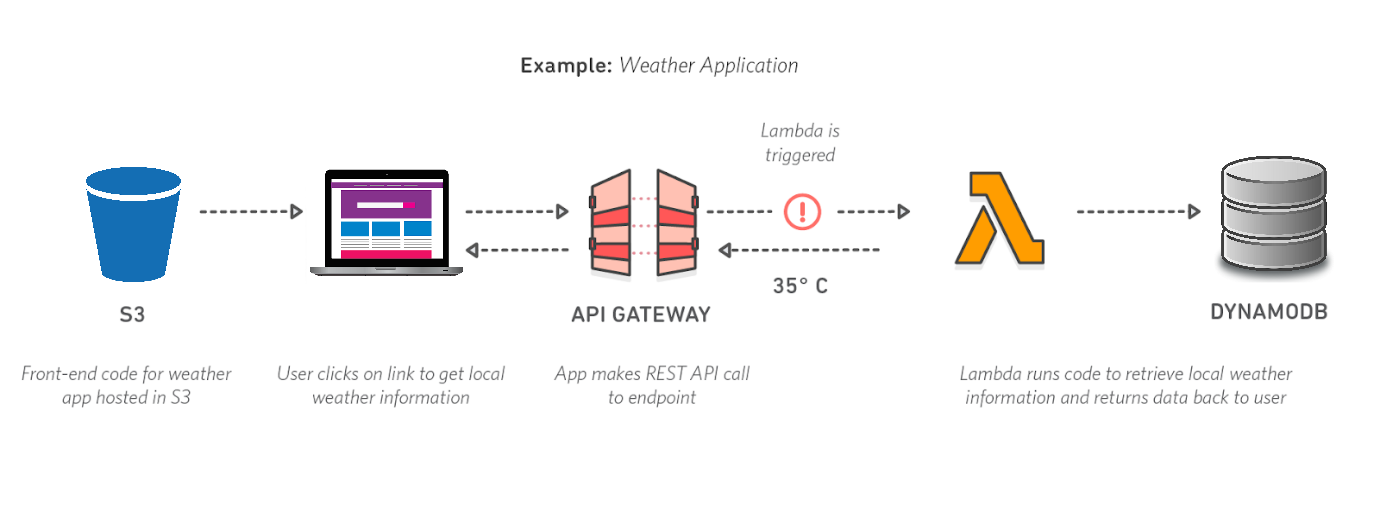

Almost all service providers restrict access to functions through authorization mechanisms such as roles and policies. Each function is assigned a key or other security credential, which gives it a unique security identity. Functions can be invoked through a gateway.
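The access model described above (credentials, roles and policies, invocation through a gateway) can be sketched in a few lines. Everything here is hypothetical: the key value, the role name, the policy table, and the `Gateway` class are illustrative and do not correspond to any provider's API.

```python
from typing import Any, Callable, Dict, Set


class Gateway:
    """Toy gateway that authorizes a caller before invoking a function."""

    def __init__(self) -> None:
        self._functions: Dict[str, Callable[[dict], Any]] = {}
        self._key_roles: Dict[str, str] = {}       # API key -> role
        self._policies: Dict[str, Set[str]] = {}   # role -> allowed function names

    def register(self, name: str, func: Callable[[dict], Any]) -> None:
        self._functions[name] = func

    def grant(self, api_key: str, role: str, allowed: Set[str]) -> None:
        self._key_roles[api_key] = role
        self._policies.setdefault(role, set()).update(allowed)

    def invoke(self, api_key: str, name: str, event: dict) -> Any:
        role = self._key_roles.get(api_key)
        if role is None:
            raise PermissionError("unknown credential")
        if name not in self._policies.get(role, set()):
            raise PermissionError(f"role {role!r} may not invoke {name!r}")
        return self._functions[name](event)


gateway = Gateway()
gateway.register("resize_image", lambda event: f"resized {event['file']}")
gateway.grant("key-123", "editor", {"resize_image"})
print(gateway.invoke("key-123", "resize_image", {"file": "cat.png"}))
```

A request with an unknown key, or with a role whose policy does not list the function, is rejected before any function code runs.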

Serverless Providers

Some serverless providers that follow the pay-per-use model are listed below.

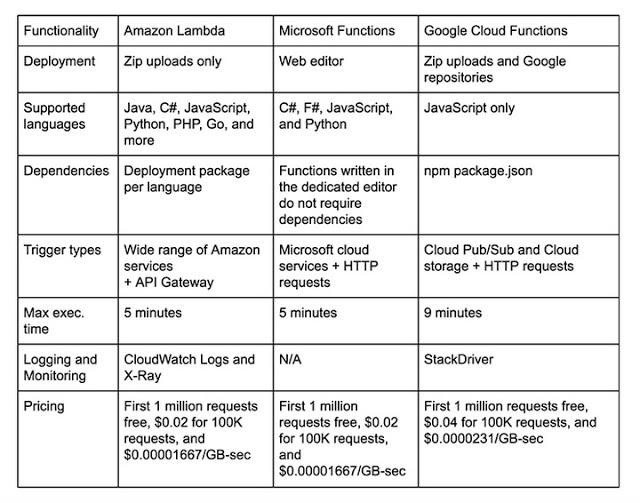

- Microsoft Azure Functions: Microsoft entered the serverless space with Azure Functions, following the trend of serverless computing. It allows you to run custom code on demand without having to worry about where it is running or how it scales. Moreover, the function runtime is open source. Like AWS Lambda, Azure Functions provides 400,000 gigabyte-seconds of compute time and 1 million requests free of charge each month. Beyond that, following the pay-per-use model, customers are charged for what they consume.

- AWS Lambda: Amazon offers a serverless computing service called AWS Lambda. In this model, the duration is calculated from the time the execution of the code begins until it terminates. The costs for transferring data are also shown in the table above; they are based on the number of bytes of outgoing data.

- Google Cloud Functions: Google Cloud Functions supports Bitbucket or GitHub as the source repository for deploying functions. It charges customers for CPU usage in addition to the number of invocations and the allocated memory. The CPU clock cycles allocated may vary from one function invocation to another.
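The billing model described above (charges for requests and for execution time multiplied by allocated memory, minus a monthly free tier) can be expressed as a short calculation. The free-tier figures are the ones quoted above; the two unit prices are placeholder assumptions, not any provider's published rates, so substitute current prices before relying on the result.

```python
FREE_GB_SECONDS = 400_000      # monthly free compute, as quoted above
FREE_REQUESTS = 1_000_000      # monthly free requests, as quoted above

# Placeholder prices for illustration only; check the provider's price list.
PRICE_PER_GB_SECOND = 0.00002
PRICE_PER_MILLION_REQUESTS = 0.20


def monthly_cost(invocations: int, avg_duration_s: float, memory_gb: float) -> float:
    """Estimate a monthly bill from invocations, duration, and memory."""
    gb_seconds = invocations * avg_duration_s * memory_gb
    billable_gb_seconds = max(0.0, gb_seconds - FREE_GB_SECONDS)
    billable_requests = max(0, invocations - FREE_REQUESTS)
    compute = billable_gb_seconds * PRICE_PER_GB_SECOND
    requests = billable_requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return round(compute + requests, 2)


# No traffic, light traffic inside the free tier, and heavy traffic.
print(monthly_cost(0, 0.2, 0.5))
print(monthly_cost(500_000, 0.2, 0.5))
print(monthly_cost(10_000_000, 0.5, 1.0))
```

The first two calls stay within the free tier, which is the "never pay for idle" property in numeric form; only the third produces a charge.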

Conclusion

Serverless platforms are proving to be a game changer. Here, high throughput rather than very low latency plays the key role. The economics of running workloads in a serverless environment make it a convincing way to reduce hosting costs considerably and to shorten the time to market for new features.